Learning Utility AI by Building It in Unreal Engine

Before I even get into the plugin side of this, I think the more interesting question is:

why does Utility AI exist in the first place?

Because it did not show up just to sound clever.

It showed up because AI gets awkward really quickly once a character has to balance multiple needs at the same time.

Not just:

- can I attack?

- can I run?

- can I reload?

But:

- should I attack right now?

- is reloading more important than pushing?

- how dangerous is this situation compared to how valuable the target is?

- what actually matters most in this exact moment?

That is the part that always pulled me in.

I have always liked AI systems that feel smart without being completely overengineered.

You know that sweet spot where behavior feels dynamic, but the code still feels like something you can come back to later without regretting your life choices?

That was basically what pushed me toward Utility AI.

And honestly, a huge reason this idea clicks so easily is because we have already seen it in games for years.

Utility AI did not start in games, but games gave it a great home

The word utility comes from much older decision theory. At a very basic level, it is about asking:

How much is this option worth to me right now?

That question fits games almost suspiciously well.

Because game AI is constantly juggling competing pressures:

- survival vs aggression,

- hunger vs comfort,

- safety vs opportunity,

- short-term gain vs long-term stability.

And at some point, hard yes/no logic starts feeling too stiff for that.

That is where utility-style systems shine. Instead of only checking whether an action is valid, they try to measure how desirable that action is in the current situation.

That shift sounds tiny.

It really is not.

It changes the whole way you think about behavior.

Why The Sims is such a good example

When people talk about Utility AI in games, The Sims comes up all the time for a reason.

Not because it invented utility theory.

But because it made the idea incredibly easy to understand.

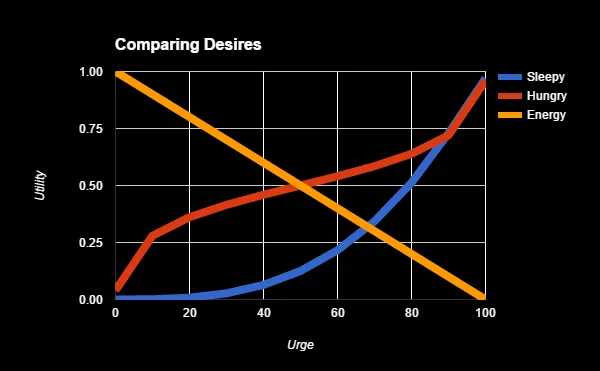

A Sim has needs. Hunger. Energy. Fun. Social. Hygiene. Bladder. Comfort. The world around that Sim is full of possible ways to satisfy those needs. Beds help with energy. Fridges help with hunger. TVs help with fun. Toilets help with bladder.

So the decision-making stops being:

is this action allowed?

And starts becoming:

out of everything around me, what helps the most right now?

That is such a clean mental model.

And honestly, I think that is why Utility AI has stayed so appealing for so long.

You can explain it with math, curves, scoring, weighting, and response functions.

Or you can explain it like this:

a Sim is exhausted, hungry, and miserable, so it picks the thing that best relieves the most urgent pressure.

That just makes immediate sense.

That was a big hook for me.

And then The Sims 3 made it even more interesting

What makes the whole thing even cooler is that The Sims 3 pushed that style of autonomy further with personality and trait-driven behavior.

So now it was not only about generic needs anymore. It also became about who that specific Sim is.

That matters a lot.

Because two agents can look at the same world state and still want different things.

One character might be more reckless. Another might value comfort earlier. Another might care more about social needs, safety, or chaos.

That is where Utility AI stops feeling like a dry scoring system and starts feeling expressive.

That is also where it started clicking harder for me.

Because I was not just looking at an AI technique anymore. I was looking at a way to author preference.

That was the real hook for me

Once I started looking at Utility AI through that lens, it stopped sounding like an abstract AI buzzword.

It became a really practical answer to a very common problem:

How do I make an agent choose between several reasonable options without hardcoding every possible priority chain?

That question led me straight into building my own version of it in Unreal Engine.

And that eventually became NsSynapse.

But honestly, the journey was not some clean “I discovered a perfect system and everything clicked instantly” kind of experience.

It was messier than that.

Way messier.

The point where traditional logic started annoying me

I think the biggest thing that started bothering me was how often AI logic felt too binary.

Can the AI attack?

Yes or no.

Can it reload?

Yes or no.

Can it retreat?

Yes or no.

That works for a while. It really does. But once you want an agent to deal with competing priorities, the whole thing starts feeling awkward.

Because real decisions usually are not binary.

Sometimes the AI can attack… but probably should not.

Sometimes retreating is smart… but maybe not yet.

Sometimes reloading is important… but definitely not while getting blasted in the open.

That was the big mental shift for me.

Instead of asking:

“Can this action happen?”

I started asking:

“How much sense does this action make right now?”

That tiny change completely changed how I thought about AI.

Utility AI clicked because it felt more… human?

That might sound dramatic, but that was honestly my reaction.

Utility AI felt way more natural because it let me think in terms of desirability instead of strict permission.

Not:

- can I attack?

- can I flee?

- can I reload?

But:

- how desirable is attacking right now?

- how desirable is fleeing right now?

- how desirable is reloading right now?

And once you frame decisions like that, things get really interesting.

An action can be technically available, but still score poorly.

Another action can become more attractive because the situation changed just enough.

Nothing has to be hard-switched unless you want it to be.

That felt really good.

This was the first moment where Utility AI stopped sounding like a neat concept and started feeling like something I genuinely wanted to build around.

Then came the reality check

Here is the part nobody really tells you when Utility AI first sounds elegant:

scoring actions is easy.

scoring actions well is not.

That was probably the first real wall I hit.

At the start, the structure feels almost too simple:

- define actions,

- define considerations,

- calculate scores,

- pick the best one.

Cool. Nice. Clean.

But then you run it.

And suddenly the AI does something technically valid… but completely weird.

Maybe it keeps bouncing between choices.

Maybe it values survival way too late.

Maybe it becomes way too aggressive.

Maybe one action wins so often that everything else may as well not exist.

That was when it hit me:

Utility AI does not magically make your AI smart. It just makes your priorities visible.

And honestly, I love that now.

At the time though? Super annoying.

Because it meant the problem was not:

the system is broken

The problem was:

my evaluation was not good enough yet

That is a very different problem. And weirdly, it is a much more interesting one.

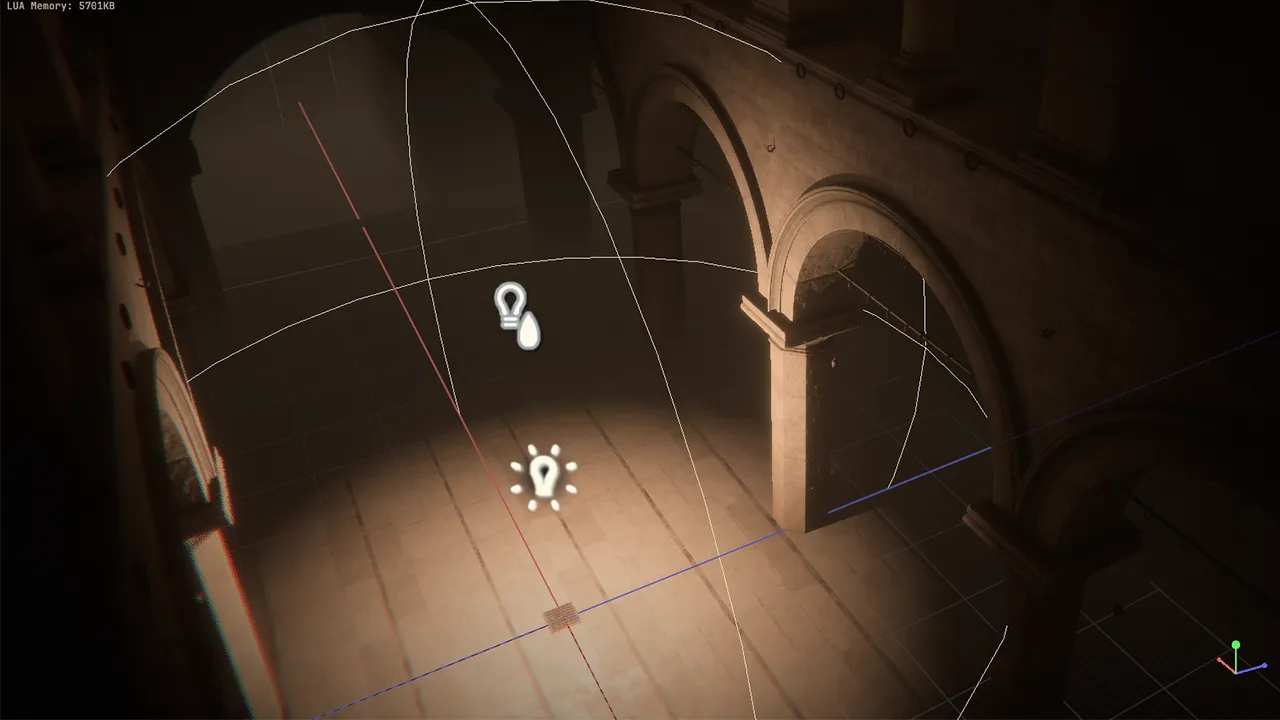

Building NsSynapse

At some point, the experiments stopped feeling like one-off prototypes and started feeling like they wanted to become a proper tool.

That is where NsSynapse came from.

I wanted a Utility AI setup in Unreal that felt:

- modular,

- readable,

- easy to extend,

- and not overly academic.

I did not want a black box. I wanted something that made sense when you looked at it.

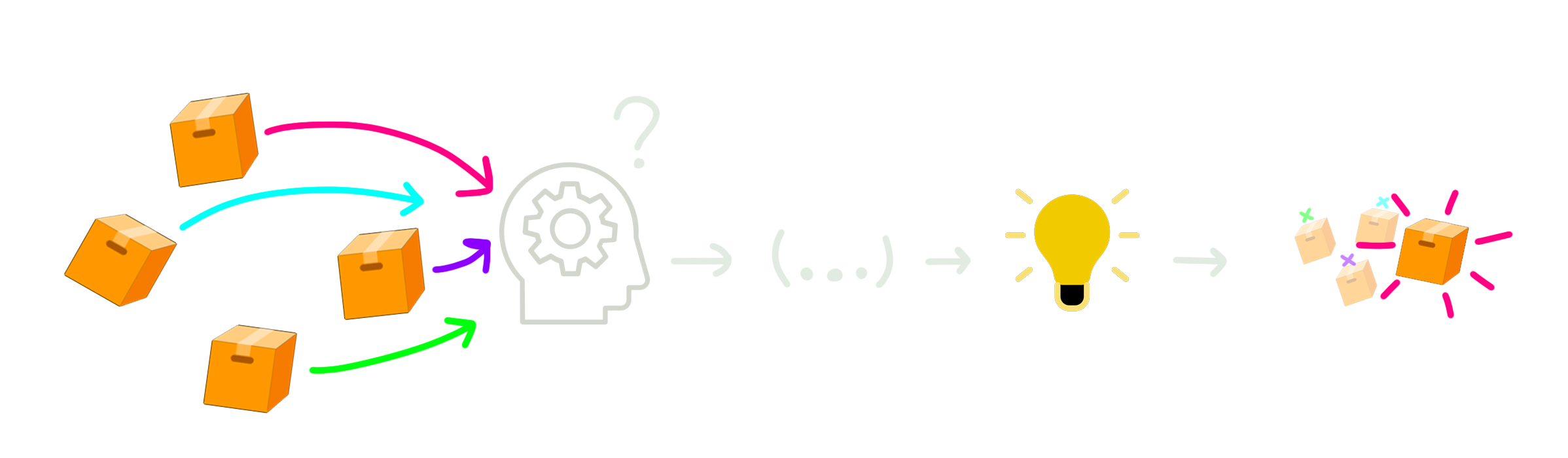

So the core idea stayed really straightforward:

- Gather the actions available to the agent

- Evaluate those actions through considerations

- Generate utility scores

- Choose the best result

Simple loop. Big impact.

What I liked about that structure is that it scales nicely.

If I want a new action, I do not need to rebuild the AI.

I just define how that action should be evaluated.

If I want smarter behavior, I do not need to rewrite everything.

I just improve the considerations, scoring, or response curves.

That felt way healthier than the usual “touch one thing, accidentally break six others” workflow.

The part that surprised me most: Utility AI is half engineering, half taste

This was probably my favorite discovery in the whole process.

On paper, Utility AI sounds very mathematical. Values go in, scores come out, highest wins.

And yes, technically that is what happens.

But in practice, tuning Utility AI feels weirdly creative.

Because numbers by themselves do not mean much.

A health value is just a value.

Distance is just a value.

Ammo count is just a value.

What matters is:

how much should the AI care about that value?

when should it start caring more?

should urgency ramp gradually, or spike hard near a threshold?

That is where response curves and score shaping became super interesting to me.

Because that is the difference between:

- an AI that feels hesitant,

- an AI that feels aggressive,

- an AI that feels cautious,

- and an AI that feels like it has some kind of personality.

That was such a cool moment.

I was not just programming choices anymore. I was shaping attitude.

And that made the system a lot more fun to build.

The annoying middle phase

There was definitely a stretch where things felt worse before they felt better.

I think every system has that phase where you start seeing its strengths and weaknesses at the same time.

For me, the biggest danger with Utility AI became obvious pretty quickly:

it is very easy to overcomplicate it

Because once everything is score-based, it becomes incredibly tempting to keep adding more signals.

Another consideration.

Another multiplier.

Another tweak.

Another override that “just helps this one case.”

And to be fair, each addition often makes sense in isolation.

But stack enough of them together and suddenly the system starts getting harder to reason about.

Then debugging changes from:

“which branch failed?”

to:

“which combination of weighted inputs created this weird score?”

That was one of the biggest lessons I had to learn while building this plugin.

Not every action needs ten considerations.

Not every score needs to be clever.

Not every edge case deserves a special rule.

Sometimes simpler scoring gives better behavior because it stays understandable.

That was a huge mindset correction for me.

More nuance does not automatically mean better AI.

That one hurt a little. But it was useful.

The moment it started feeling good

The payoff came when the decisions stopped feeling forced.

That is the best way I can describe it.

The AI started doing things that felt reasonable because of the situation, not because I had explicitly hardcoded some exact path for that moment.

Attack when pressure is low enough.

Retreat when survival suddenly matters more.

Reload when there is breathing room.

Shift priorities naturally when the world changes.

That was the point where Utility AI really earned its place for me.

Because it handled competing needs in a way that felt clean.

Not random.

Not magical.

Just… weighted.

And that is honestly what I wanted all along.

I did not need fake intelligence. I wanted believable prioritization.

Utility AI was the first approach that really made that feel natural.

What NsSynapse taught me

Looking back, I think the biggest lesson was this:

good AI is often less about complexity and more about good evaluation

That sounds obvious now, but I definitely did not start there.

Like a lot of people, I used to think smarter behavior naturally meant more elaborate systems. More transitions. More conditions. More layers.

But building this plugin really hammered home the opposite idea.

A surprisingly simple loop can produce pretty interesting behavior if the scoring model is solid.

And if the scoring model is weak, no amount of architectural elegance is going to save it.

That was such a useful lesson, not just for AI, but for systems design in general.

Because it reminded me that:

- simple does not mean weak,

- modular does not mean shallow,

- and “smart” behavior often comes from good priorities, not big complexity.

What I still like about it now

I think what I appreciate most is that Utility AI changed how I think about behavior.

It made me stop designing AI like a sequence of forced answers.

Instead, it made me think in terms of:

- pressure,

- context,

- desirability,

- tradeoffs,

- and timing.

That feels a lot closer to how interesting decisions actually work in games.

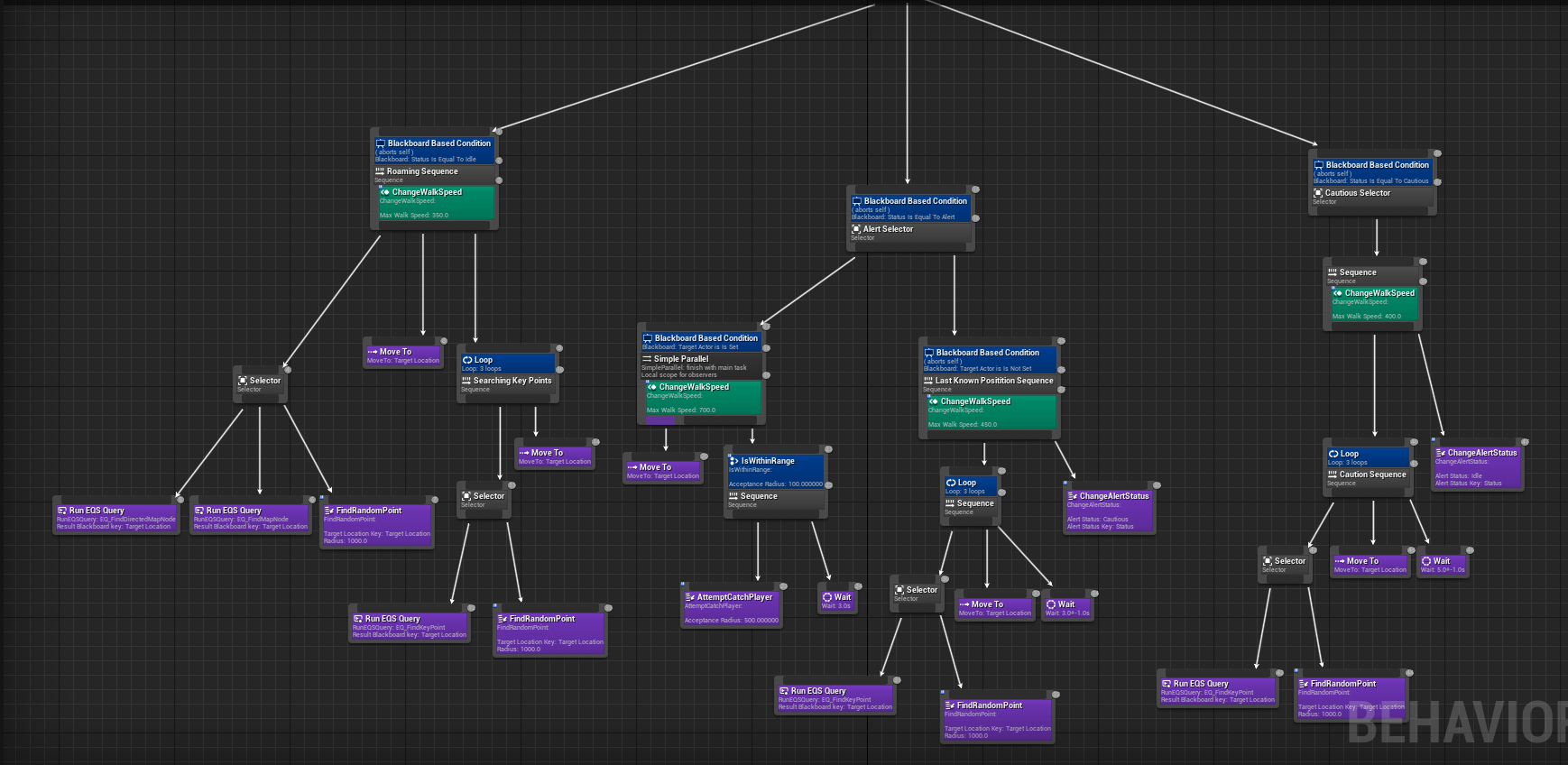

And no, I do not think Utility AI replaces everything. Different problems need different tools. Sometimes a behavior tree is still exactly the right answer. Sometimes a simple finite state machine is enough.

But Utility AI has a really nice place in the toolbox, especially when I want decisions to feel fluid and layered.

That is the part that stuck with me.

Final thoughts

NsSynapse started as curiosity.

Then it became an experiment.

Then a plugin.

Then one of those projects that quietly changes how you approach future work.

And I think that is why I like it so much.

Not because Utility AI is some magical answer to everything.

But because building it forced me to think more honestly about decision-making.

It made me question rigid logic.

It made me care more about tuning.

It made me pay attention to what the AI should value, and when.

And somewhere in that process, I stopped just writing “AI behavior” and started thinking more about authored intent.

That shift alone made the whole journey worth it.

If there is one takeaway I would leave with, it is this:

Utility AI gets really interesting when you stop asking “what can the AI do?” and start asking “what matters most right now?”

That question led me down the path of building NsSynapse.

And honestly, I am glad it did.